Explore the key components you’ll need to build an entire Cloud Foundry architecture.

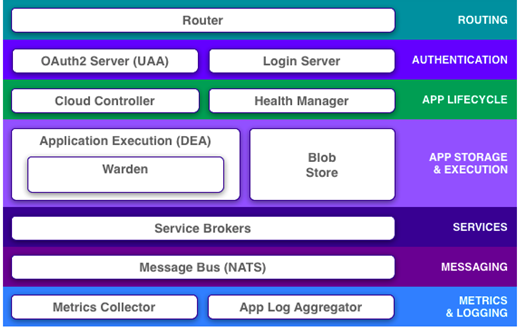

In a recent post, I spoke about some of Cloud Foundry’s main features. Now I will explore in greater depth the components you’ll need to build an entire Cloud Foundry architecture. This is a good representation of Cloud Foundry’s major moving parts:

Router

Once an application is deployed in Cloud Foundry, all external system and application traffic is controlled and directed through the router. The router maintains a dynamic route table for all applications deployed in a load-balanced environment, so you don’t need to worry about updating routing information to reflect changes to a deployed application or the underlying DEAs (which we’ll discuss soon). You can also configure the router for high availability by defining the number of router instances you’ll require to support a load-balanced Cloud Foundry environment.

In short, the router handles your application traffic as efficiently as possible and spares you the overhead of maintaining routing tables and complex port configurations.
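To make the idea of a dynamic route table concrete, here is a minimal sketch in Python. It is not the router's actual implementation: the `RouteTable` class, its method names, and the round-robin policy are assumptions chosen for illustration.

```python
class RouteTable:
    """Toy route table: maps a hostname to the backends currently serving it."""

    def __init__(self):
        self._backends = {}  # hostname -> list of "ip:port" strings
        self._cursors = {}   # hostname -> round-robin position

    def register(self, host, backend):
        # Called when an application instance starts serving a hostname.
        backends = self._backends.setdefault(host, [])
        if backend not in backends:
            backends.append(backend)

    def unregister(self, host, backend):
        # Called when an application instance stops or moves.
        backends = self._backends.get(host, [])
        if backend in backends:
            backends.remove(backend)
        if not backends:
            self._backends.pop(host, None)
            self._cursors.pop(host, None)

    def lookup(self, host):
        # Pick the next backend for this hostname in round-robin order.
        backends = self._backends.get(host)
        if not backends:
            return None
        index = self._cursors.get(host, 0) % len(backends)
        self._cursors[host] = index + 1
        return backends[index]


# Hypothetical hostname and addresses, for illustration only.
table = RouteTable()
table.register("myapp.example.com", "10.0.0.1:61001")
table.register("myapp.example.com", "10.0.0.2:61002")
print(table.lookup("myapp.example.com"))
```

Because instances register and unregister themselves, nobody has to edit the table by hand when an application is scaled or moved.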

User Account and Authentication (UAA) and Login server

The UAA and Login server manage Cloud Foundry’s authentication mechanism, acting as a kind of identity management service. UAA is an OAuth2 authorization server: it issues the tokens that client applications use when they act on behalf of the resource owner (that is, the user).

Droplet Execution Agent (DEA)

The DEA is the core of Cloud Foundry functionality. But before going deeper into the DEA itself, it’s worth mentioning some of the tools that help it do its job.

Buildpacks

Buildpacks are the scripts through which Cloud Foundry identifies the required runtime or framework for the application. Buildpacks are responsible for identifying an application’s related dependencies based on user-provided artifacts. They will then ensure everything is properly downloaded and configured.

So imagine that you want to push a WAR file to a Cloud Foundry environment. Buildpacks are smart enough to identify the programming language, framework, and application container you’ll need to properly deploy, and then automatically download everything for you from GitHub. If your application uses a language or framework that Cloud Foundry buildpacks do not support, you can write custom buildpacks.
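The detection step can be pictured roughly as follows. This is a hedged sketch rather than the logic of any real buildpack: the `detect_buildpack` function, the buildpack names, and the marker files it checks are all assumptions made for illustration.

```python
def detect_buildpack(filenames):
    """Return the name of the first hypothetical buildpack whose rule matches."""
    # Each rule pairs an illustrative buildpack name with a check on the
    # files the user pushed. Real buildpacks apply their own detection rules.
    rules = [
        ("java", lambda names: any(n.endswith((".war", ".jar")) for n in names)),
        ("nodejs", lambda names: "package.json" in names),
        ("ruby", lambda names: "Gemfile" in names),
        ("python", lambda names: "requirements.txt" in names),
    ]
    for name, matches in rules:
        if matches(filenames):
            return name
    return None  # nothing matched: a custom buildpack would be needed


print(detect_buildpack(["shop.war"]))
print(detect_buildpack(["README.md"]))
```

The first rule that matches wins, and a push that matches nothing is the case where you would write a custom buildpack.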

Droplet

A droplet is the Cloud Foundry unit of execution. Once an application is pushed to Cloud Foundry and staged using a buildpack, the result is a droplet. A droplet, therefore, is simply an abstraction on top of the application that also carries information such as metadata. Droplets are stored in blob storage for use in later deployment steps.

Warden

Once the droplet is ready, it will need hosting in a suitable environment. In Cloud Foundry, this is called a Warden container. Warden containers provide isolated, ephemeral, resource-controlled environments.

Now we can properly define the task of a Droplet Execution Agent.

A DEA will select the appropriate buildpack and use it both to stage your application and to manage the complete life cycle of the resulting application instance. An application instance consists of a droplet and a Warden container. A DEA will continually broadcast the health status of its application instances to the health manager, which communicates internally with the cloud controller. Requests are directed to the DEA through the cloud controller.

Cloud Controller

You can think of the cloud controller as the brains of a Cloud Foundry environment, as it manages the entire application life cycle. Hold on: didn’t I just say in the previous section that it’s the DEA that manages the life cycle of an application instance? So what’s with the cloud controller muscling in on its turf?

Let’s try to clear this up. As soon as the user requests an application deployment via the CLI, the first request goes to the cloud controller. It’s then the controller’s responsibility to redirect the request to a suitable DEA from the available pool. The cloud controller also tracks application metadata and stores droplets in the blob store. Hence the cloud controller’s role begins long before the DEA gets involved, and the controller therefore has a broader view of application deployments.

Service Brokers

Almost all applications depend on some external services like a database or third-party components. In traditional development, we bind those components to an application using property files and store them outside the deployable, so that they can be modified whenever required without affecting the running application code. But that’s not possible when you deploy applications in Cloud Foundry, because applications live in a Warden container, which is not persistent.

Cloud Foundry, therefore, uses service brokers, through which developers can provision and bind a particular service to an application. Service brokers can be used to define the relationship between the application and services like databases. This permits loose coupling between an app and a service. Conceptually, this is no different from traditional implementations, except that, instead of having the developer manage a property file, the platform itself provides the placeholder for your service instance properties.
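In practice, that placeholder reaches the application as the `VCAP_SERVICES` environment variable, a JSON document keyed by service label. Below is a minimal sketch of reading it in Python. The `service_credentials` helper, the `orders-db` instance name, and the sample values are made up for illustration, and the fields inside `credentials` vary by broker.

```python
import json
import os


def service_credentials(instance_name, environ=None):
    """Look up the credentials of a bound service instance by name."""
    environ = os.environ if environ is None else environ
    services = json.loads(environ.get("VCAP_SERVICES", "{}"))
    # VCAP_SERVICES maps each service label to a list of bound instances.
    for instances in services.values():
        for instance in instances:
            if instance.get("name") == instance_name:
                return instance.get("credentials", {})
    return None  # no bound instance with that name


# Sample payload in the general shape of VCAP_SERVICES; all values are made up.
sample_env = {
    "VCAP_SERVICES": json.dumps({
        "mysql": [
            {
                "name": "orders-db",
                "credentials": {"hostname": "10.0.0.5", "port": 3306},
            }
        ]
    })
}

creds = service_credentials("orders-db", sample_env)
print(creds["hostname"], creds["port"])
```

Inside a running application you would call `service_credentials("orders-db")` without the second argument, so that the helper reads the real environment.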

HM9000 – Health Manager

Cloud Foundry is supposed to provide a highly available environment for all deployed applications. The health status of those applications is monitored by the health manager (HM9000). Let’s say that you told the cloud controller that you want ten instances of your application running across the available DEAs. It is the responsibility of HM9000 to watch for a mismatch between the desired number of instances (ten) and the number that is actually running. If those numbers don’t match, HM9000 will immediately contact the cloud controller to spin up enough new instances to match the desired number. The cloud controller does that by using the droplet stored for that application in blob storage.
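The comparison between desired and actual state can be sketched in a few lines of Python. This is an illustration of the idea only, not HM9000's code: the `instances_to_start` function and the use of instance indices are assumptions.

```python
def instances_to_start(desired, running_indices):
    """Return the instance indices that are missing and should be started."""
    running = set(running_indices)
    # Every index from 0 to desired - 1 should be running; report the gaps.
    return [index for index in range(desired) if index not in running]


# Ten instances are desired, but instances 7 and 9 have crashed.
missing = instances_to_start(10, [0, 1, 2, 3, 4, 5, 6, 8])
print(missing)
```

A health manager would repeat a check like this on every heartbeat cycle and ask the cloud controller to start whatever is missing.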

NATS

Up to this point, we’ve discussed how the cloud controller redirects requests to a DEA and how HM9000 instructs the cloud controller. But don’t you want to know how these components interact with each other? I think that’s even more important.

The Cloud Foundry architecture follows a distributed design, which you can clearly see when examining how components interact. They use NATS, a lightweight distributed queueing and messaging system. If you are familiar with the message bus concept, then you shouldn’t find it too difficult to understand the role of NATS in Cloud Foundry’s architecture.
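If the message bus concept is new to you, the following toy example shows the publish/subscribe pattern it relies on. It runs in a single process and is not the NATS client API: the `MessageBus` class and the `demo.heartbeat` subject are hypothetical names used only for illustration.

```python
from collections import defaultdict


class MessageBus:
    """Toy in-process publish/subscribe bus (not the NATS client API)."""

    def __init__(self):
        self._subscribers = defaultdict(list)  # subject -> list of callbacks

    def subscribe(self, subject, callback):
        # Register interest in a subject; the publisher never needs to know.
        self._subscribers[subject].append(callback)

    def publish(self, subject, message):
        # Deliver to every subscriber of this subject; return how many got it.
        callbacks = list(self._subscribers.get(subject, []))
        for callback in callbacks:
            callback(message)
        return len(callbacks)


bus = MessageBus()
received = []
bus.subscribe("demo.heartbeat", received.append)
bus.publish("demo.heartbeat", {"instance": 0, "state": "RUNNING"})
print(received)
```

The point of the pattern is that publishers and subscribers only share a subject name, so components can be added or replaced without rewiring the others.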

Metrics and Log Aggregators

Last but not least, we need to discuss metrics and logging. As with any PaaS deployment, it’s not ideal to log in directly to Cloud Foundry instances. This makes it difficult to access logs while debugging or troubleshooting an application issue. It’s also hard to monitor your components using normal tools, because it’s not advisable to set up agents on PaaS-provisioned instances.

Not to worry, however, as Cloud Foundry has already thought of that. The metrics collector provides metrics for all the components that need monitoring, and the application log aggregator streams application logs wherever you tell it to.

So, that’s pretty much it for Cloud Foundry’s major components. This diagram should help you understand the complete flow, from application push to application staging.

There are just a couple of things that may not be direct parts of the Cloud Foundry environment, but are worth discussing:

The CLI (Command Line Interface) is the interface for deploying and managing your applications in the Cloud Foundry environment. Cloud Foundry’s CLI documentation can be found here.

BOSH is a tool for deploying all the components we’ve discussed above across distributed nodes. BOSH orchestrates the deployment process of a distributed system. Detailed documentation on BOSH can be found here.

I hope these two posts have helped you better understand the design, purpose, and function of Cloud Foundry. Please feel free to leave your feedback in the comments.