Is it possible to create an S3 FTP file backup/transfer solution while minimizing the administrative headaches of file storage and capacity planning?

FTP (File Transfer Protocol) is a fast and convenient way to transfer large files over the Internet. You might, at some point, have configured an FTP server and used block storage, NAS, or a SAN as your backend. However, using this kind of storage requires infrastructure support and can cost you a fair amount of time and money.

Could an S3 FTP solution work better? Since AWS’s reliable and competitively priced infrastructure is just sitting there waiting to be used, we were curious to see whether AWS can give us what we need without the administration headache.

Updated 14/Aug/2019 – streamlined instructions and confirmed that they are still valid and work.

Why S3 FTP?

Because Amazon S3 is reliable and accessible. Also, in case you missed it, AWS announced some new Amazon S3 features at the most recent edition of re:Invent.

- Amazon S3 provides infrastructure that’s “designed for durability of 99.999999999% of objects.”

- Amazon S3 is built to provide “99.99% availability of objects over a given year.”

- You pay for exactly what you need with no minimum commitments or up-front fees.

- With Amazon S3, there’s no limit to how much data you can store or when you can access it.

- Last but not least, you can always optimize Amazon S3’s performance.

NOTE: FTP is not a secure protocol and should not be used to transfer sensitive data. You might consider using the SSH File Transfer Protocol (sometimes called SFTP) for that.

Using S3 FTP: object storage as filesystem

SAN, iSCSI, and local disks are block storage devices: their volumes attach directly to a machine whose operating system drives the filesystem operations. S3, by contrast, is built for object storage. Interactions occur at the application level through an API, so you can’t mount S3 directly within your operating system.

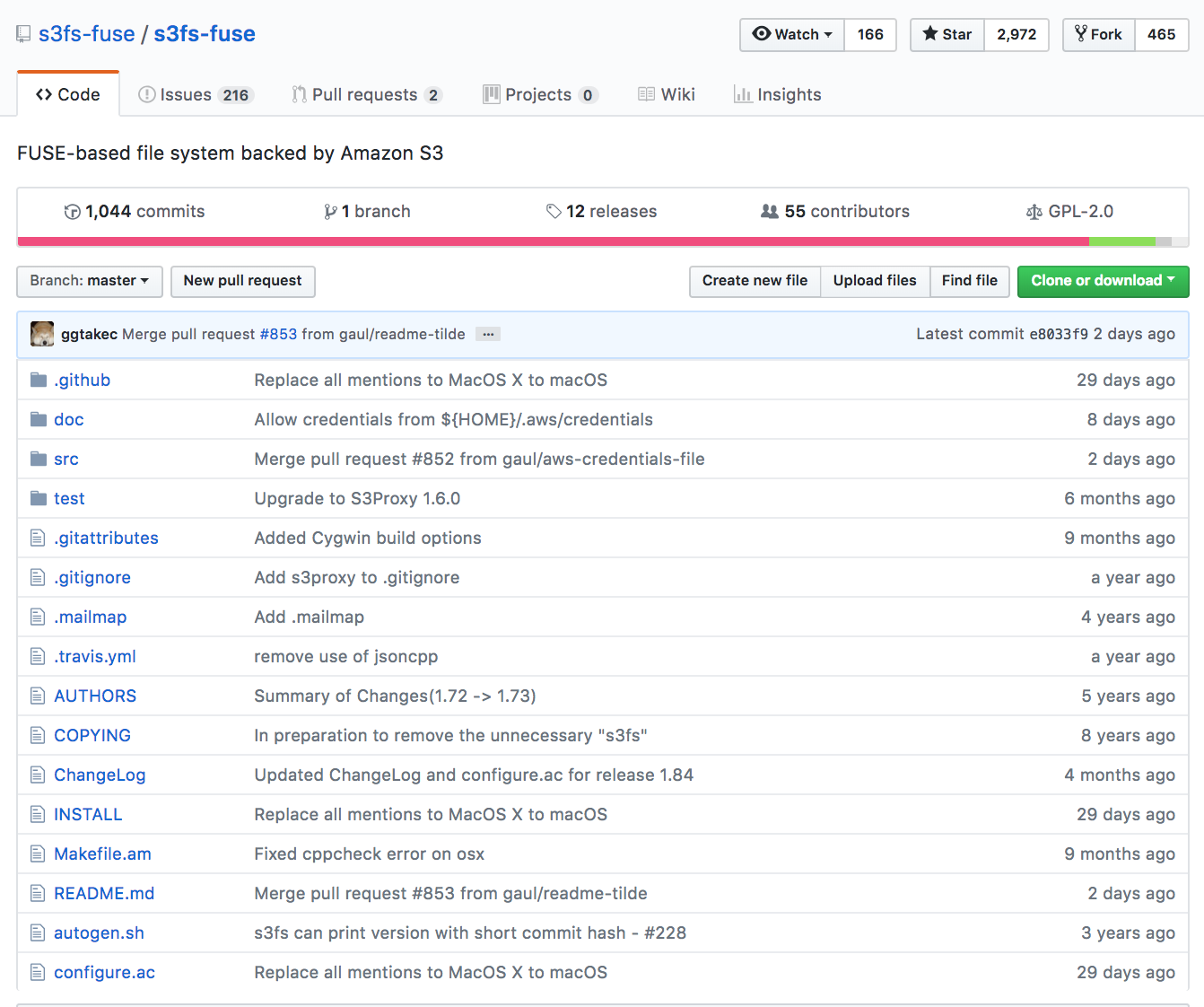

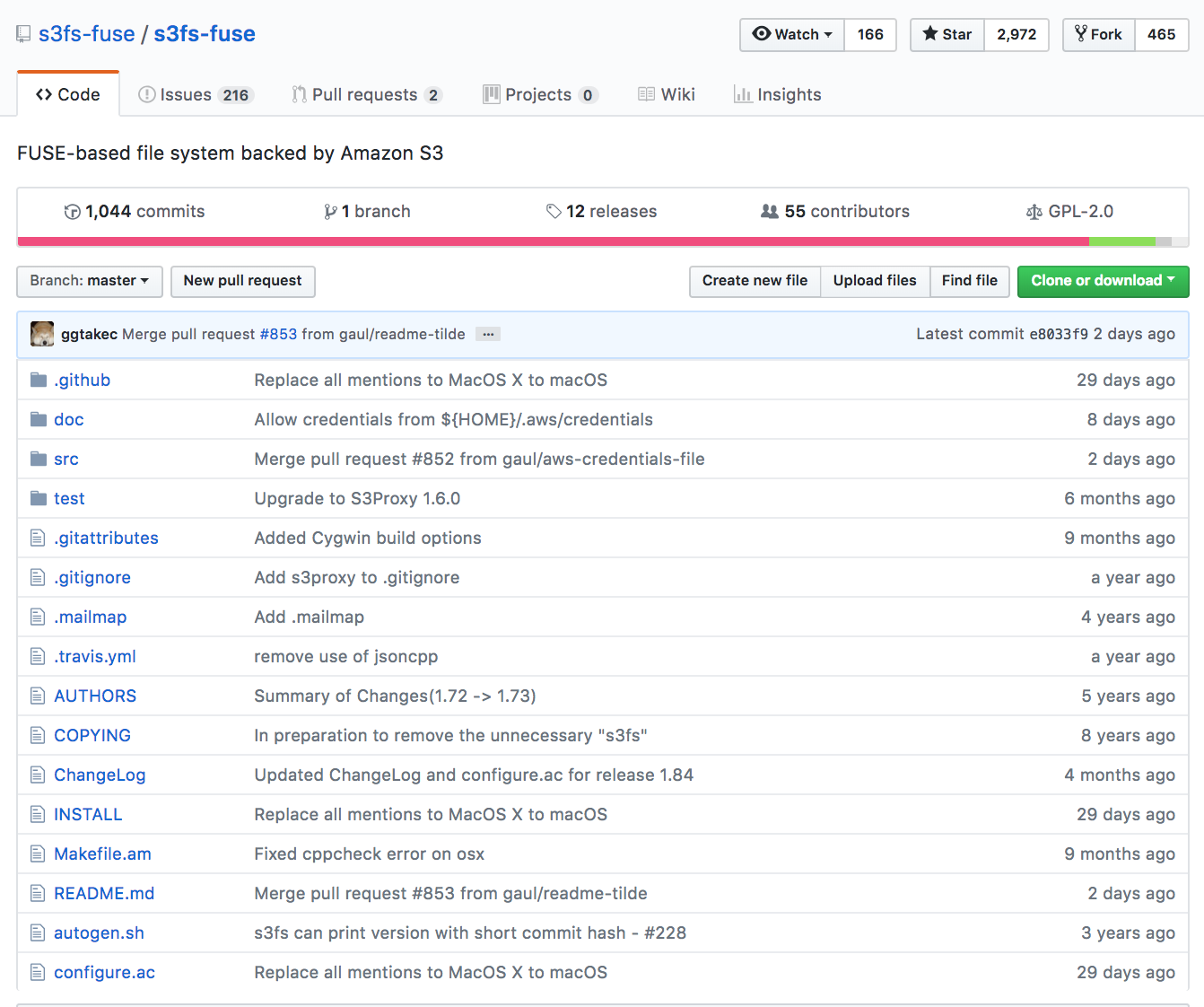
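To make the distinction concrete, here is a minimal sketch of what API-level access looks like with the AWS CLI, in contrast to ordinary filesystem commands. The helper names s3_put and s3_get are hypothetical and exist only for illustration; the bucket name is whatever you use in your own environment.

```shell
# Hypothetical helpers: each call below is an HTTPS request to the S3 API,
# not a read or write against a mounted disk.

# Upload a local file as an object. Arguments: local path, bucket, object key.
s3_put() {
  aws s3 cp "$1" "s3://$2/$3"
}

# Download an object to a local file. Arguments: bucket, object key, local path.
s3_get() {
  aws s3 cp "s3://$1/$2" "$3"
}

# Usage (requires AWS credentials):
# s3_put ./mp3data ca-s3fs-bucket mp3data
# s3_get ca-s3fs-bucket mp3data ./mp3data.copy
```

An FTP server, however, expects a directory it can read and write with normal file operations, which is the gap the next section closes.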

S3FS To the Rescue

S3FS-Fuse will let us mount a bucket as a local filesystem with read/write access. On an S3FS-mounted filesystem, we can use cp, mv, ls, and the other basic Unix file management commands to manage objects as if they were files on a locally attached disk. S3FS-Fuse is built on FUSE (Filesystem in Userspace), which lets a fully functional filesystem run as a userspace program.

So it seems that we’ve got all the pieces for an S3 FTP solution. How will it actually work?

S3FTP Environment Settings

The following environment settings are used throughout this setup. If you deviate from these values, substitute your own wherever they appear:

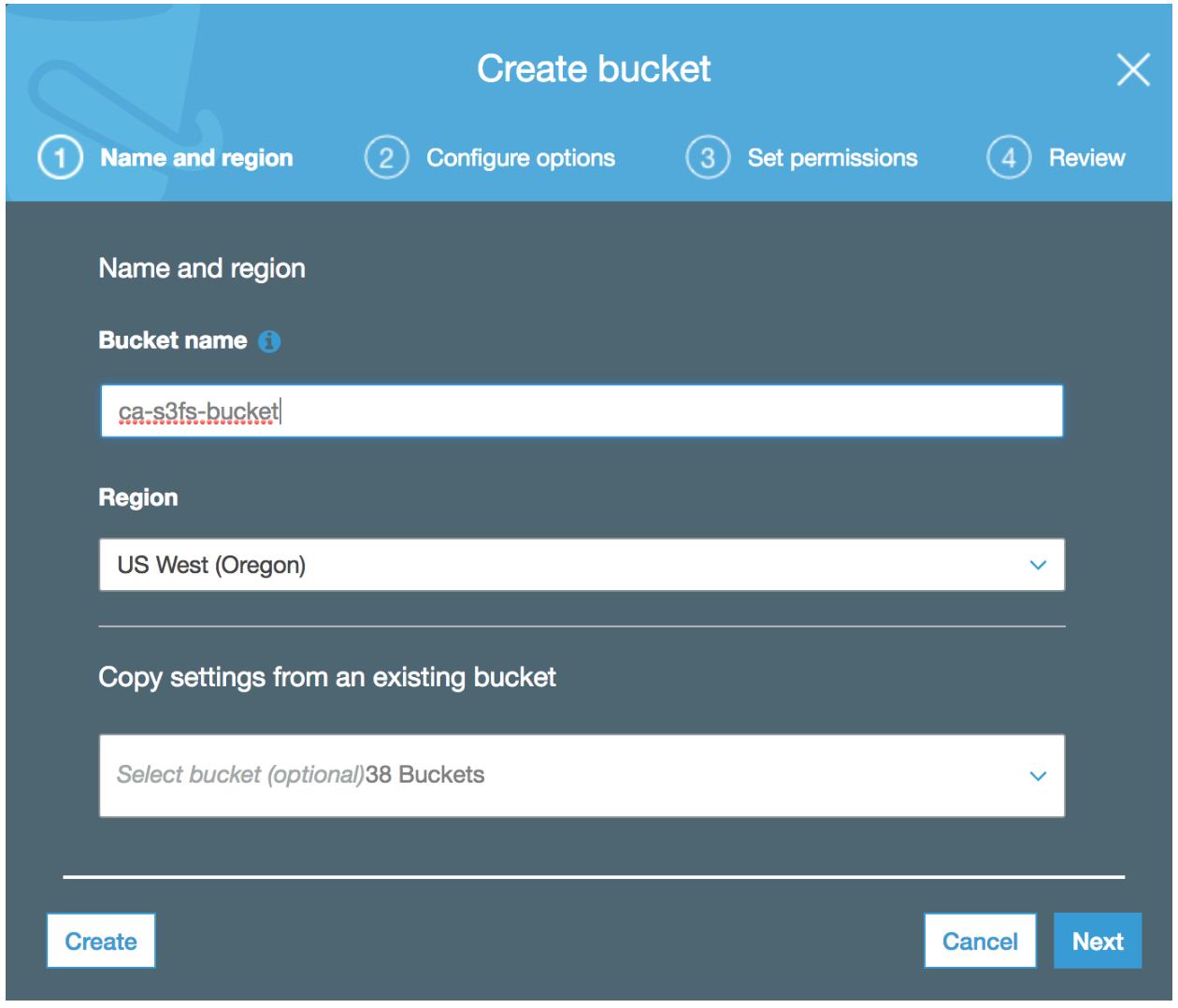

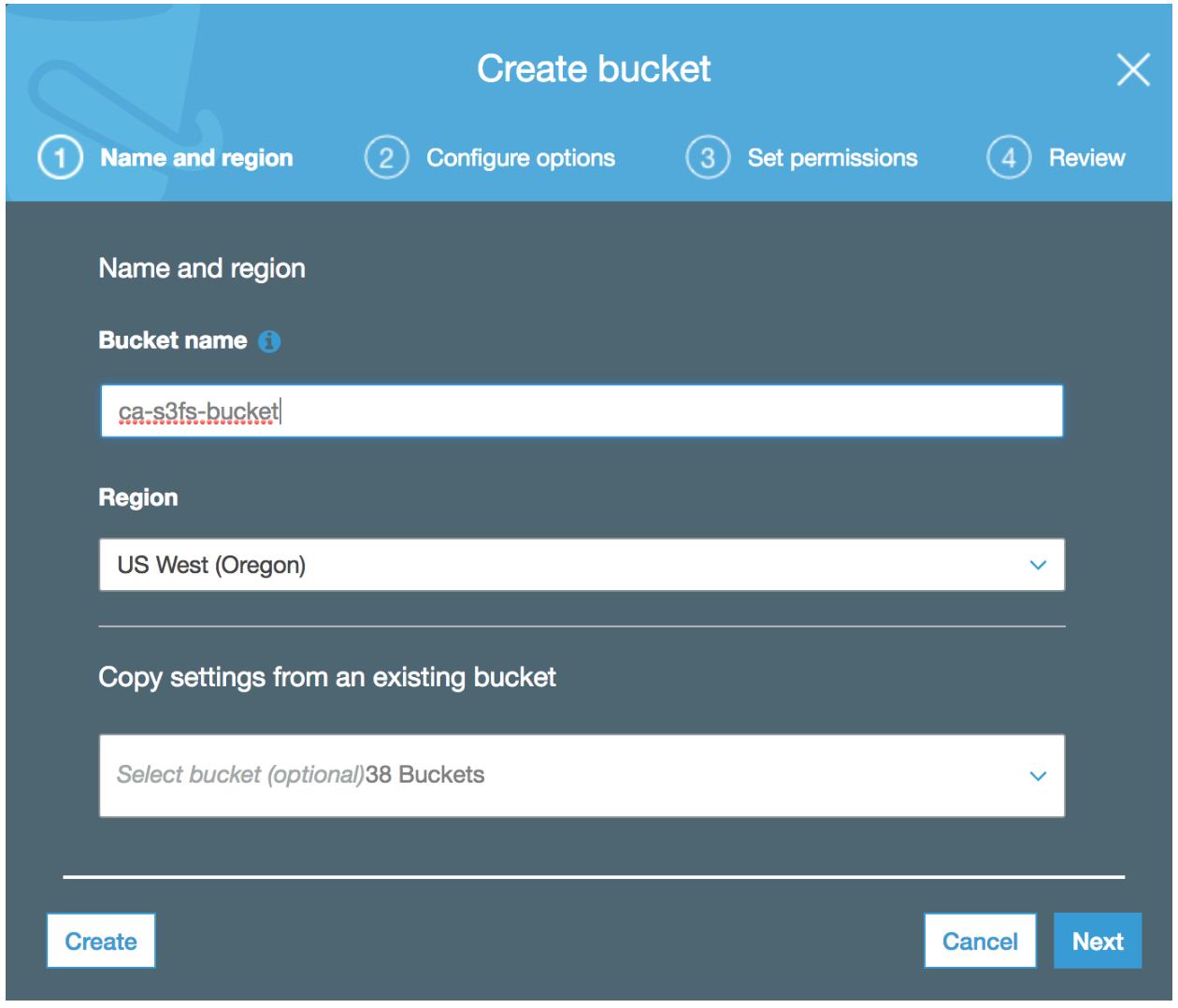

- S3 Bucket Name: ca-s3fs-bucket (you’ll need to use your own unique bucket name)

- S3 Bucket Region: us-west-2

- S3 IAM Policy Name: S3FS-Policy

- EC2 IAM Role Name: S3FS-Role

- EC2 Public IP Address: 18.236.230.74 (yours will definitely be different)

- FTP User Name: ftpuser1

- FTP User Password: your-strong-password-here

Note: The remainder of this setup assumes that the S3 bucket and EC2 instance are deployed/provisioned in the same AWS Region.

S3FTP Installation and Setup

Step 1: Create an S3 Bucket

The first step is to create an S3 bucket, which will be the final destination for our FTP-uploaded files. We can do this using the AWS console.
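If you prefer to script this step, a minimal sketch using the AWS CLI might look like the following. The helper name create_s3fs_bucket is hypothetical; the defaults are the example bucket name and region from the settings above, and you would pass your own unique bucket name.

```shell
# Hypothetical helper: create the bucket used throughout this walkthrough.
# Arguments: bucket name, region (defaults mirror the example environment).
create_s3fs_bucket() {
  bucket="${1:-ca-s3fs-bucket}"
  region="${2:-us-west-2}"

  if [ "$region" = "us-east-1" ]; then
    # us-east-1 is the default location and rejects an explicit constraint.
    aws s3api create-bucket --bucket "$bucket" --region "$region"
  else
    # Every other region needs a matching LocationConstraint.
    aws s3api create-bucket --bucket "$bucket" --region "$region" \
      --create-bucket-configuration "LocationConstraint=$region"
  fi
}

# Usage (requires AWS credentials):
# create_s3fs_bucket my-unique-bucket-name us-west-2
```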

Step 2: Create an IAM Policy and Role for S3 Bucket Read/Write Access

Next, we create an IAM Policy and Role to control access into the previously created S3 bucket.

Later on, our EC2 instance will be launched with this role attached to grant it read and write bucket permissions. Note, it is very important to take this approach with respect to granting permissions to the S3 bucket, as we want to avoid hard coding credentials within any of our scripts and/or configuration later applied to our EC2 FTP instance.

We can use the following AWS CLI command and JSON policy file to perform this task:

aws iam create-policy \
  --policy-name S3FS-Policy \
  --policy-document file://s3fs-policy.json

Where the contents of the s3fs-policy.json file are:

Note: the bucket name (ca-s3fs-bucket) needs to be replaced with the S3 bucket name that you use within your own environment.

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": ["s3:ListBucket"],
      "Resource": ["arn:aws:s3:::ca-s3fs-bucket"]
    },
    {
      "Effect": "Allow",
      "Action": [
        "s3:PutObject",
        "s3:GetObject",
        "s3:DeleteObject"
      ],
      "Resource": ["arn:aws:s3:::ca-s3fs-bucket/*"]
    }
  ]
}

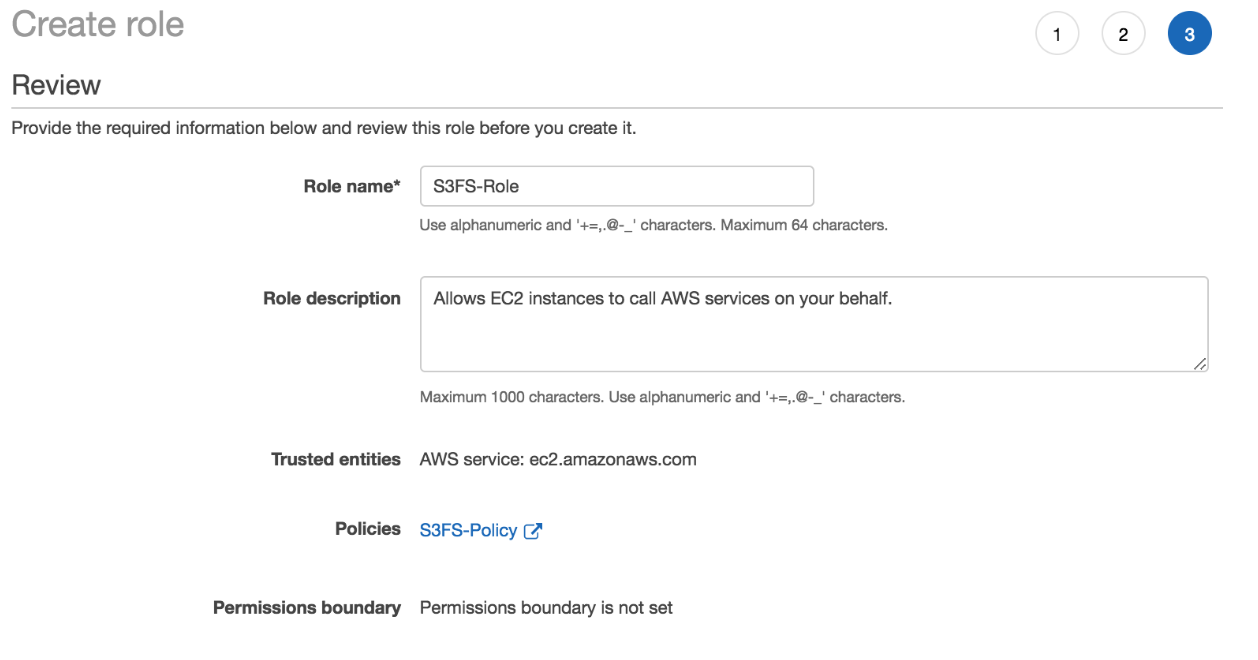

Using the AWS IAM console, we then create the S3FS-Role and attach the S3FS-Policy like so:

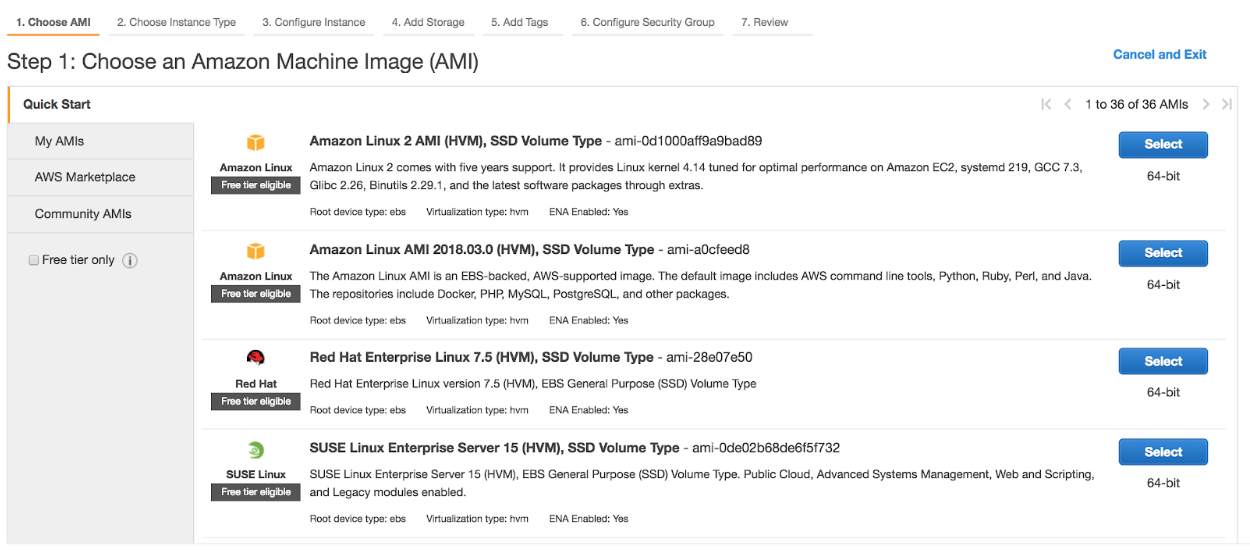

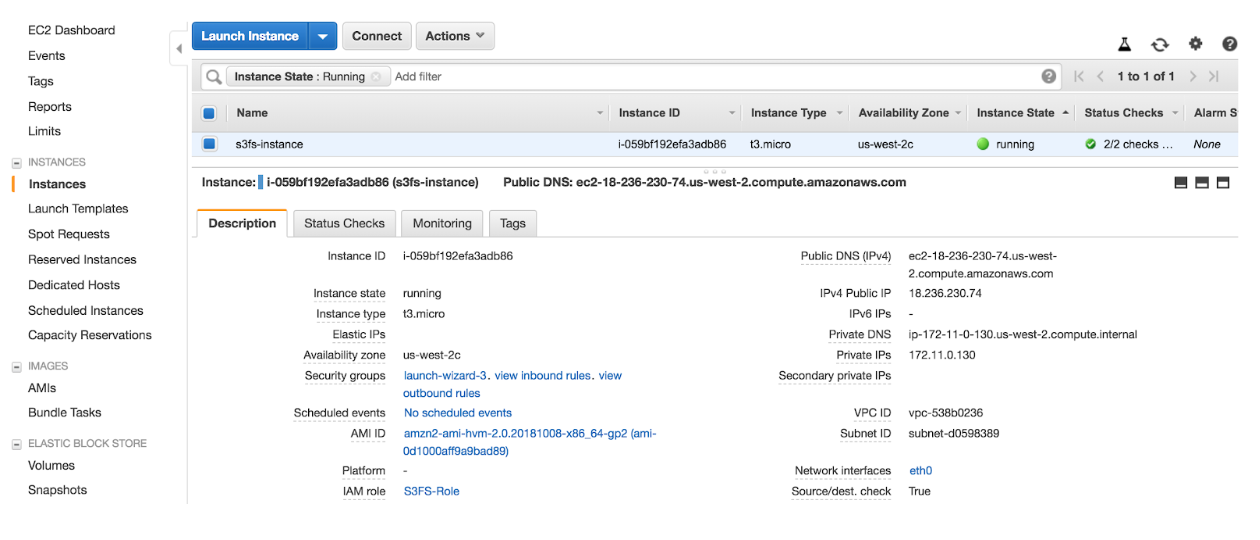

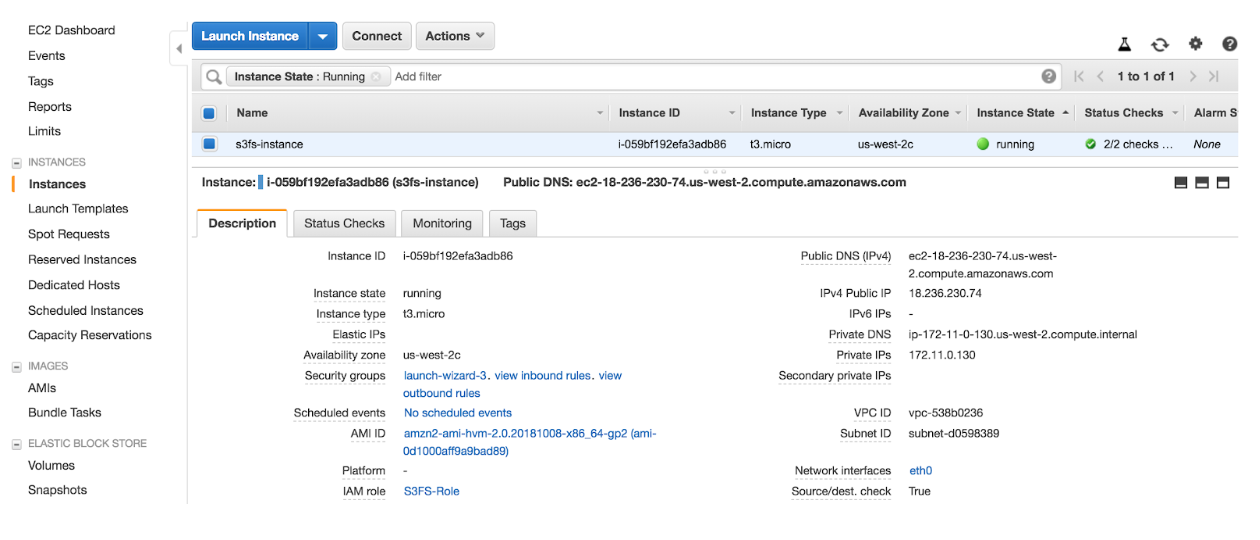
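The console steps above can also be approximated with the AWS CLI. The sketch below is an assumption-laden equivalent, not the method used in this walkthrough: the helper names, the trust policy file name, and the account ID argument are all hypothetical. It also creates an instance profile named after the role, which is what the console does for you when you create a role for EC2.

```shell
# Hypothetical helper: write the trust policy that lets EC2 assume the role.
# Argument: path of the file to write.
write_trust_policy() {
  cat > "$1" << 'EOF'
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": { "Service": "ec2.amazonaws.com" },
      "Action": "sts:AssumeRole"
    }
  ]
}
EOF
}

# Hypothetical helper: create the role, attach S3FS-Policy, and expose the
# role to EC2 through an instance profile of the same name.
# Arguments: 12-digit AWS account ID, trust policy path (optional).
create_s3fs_role() {
  account_id="$1"
  trust_file="${2:-ec2-trust-policy.json}"

  write_trust_policy "$trust_file"
  aws iam create-role \
    --role-name S3FS-Role \
    --assume-role-policy-document "file://$trust_file"
  aws iam attach-role-policy \
    --role-name S3FS-Role \
    --policy-arn "arn:aws:iam::${account_id}:policy/S3FS-Policy"
  aws iam create-instance-profile \
    --instance-profile-name S3FS-Role
  aws iam add-role-to-instance-profile \
    --instance-profile-name S3FS-Role \
    --role-name S3FS-Role
}

# Usage (requires AWS credentials; substitute your own account ID):
# create_s3fs_role 123456789012
```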

Step 3: Launch FTP Server (EC2 instance – Amazon Linux)

We’ll use AWS’s Amazon Linux 2 for our EC2 instance that will host our FTP service. Again using the AWS CLI we can launch an EC2 instance by running the following command – ensuring that we launch with the S3FS-Role attached.

Note: in this case we are lazily using the --associate-public-ip-address parameter to temporarily assign a public IP address for demonstration purposes. In a production environment, we would provision an Elastic IP (EIP) address and use that instead.

aws ec2 run-instances \
  --image-id ami-0d1000aff9a9bad89 \
  --count 1 \
  --instance-type t3.micro \
  --iam-instance-profile Name=S3FS-Role \
  --key-name EC2-KEYNAME-HERE \
  --security-group-ids SG-ID-HERE \
  --subnet-id SUBNET-ID-HERE \
  --associate-public-ip-address \
  --region us-west-2 \
  --tag-specifications \
  'ResourceType=instance,Tags=[{Key=Name,Value=s3fs-instance}]' \
  'ResourceType=volume,Tags=[{Key=Name,Value=s3fs-volume}]'
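For the production approach mentioned in the note above, a rough sketch of allocating an Elastic IP and binding it to the instance could look like this. The helper name attach_elastic_ip is hypothetical, and the instance ID is something you would read from the run-instances output.

```shell
# Hypothetical helper: allocate an Elastic IP and bind it to the FTP instance.
# Arguments: EC2 instance ID, region (defaults to the example region).
attach_elastic_ip() {
  instance_id="$1"
  region="${2:-us-west-2}"

  # allocate-address returns the AllocationId needed for the association.
  allocation_id="$(aws ec2 allocate-address \
    --domain vpc \
    --region "$region" \
    --query AllocationId \
    --output text)" || return 1

  aws ec2 associate-address \
    --instance-id "$instance_id" \
    --allocation-id "$allocation_id" \
    --region "$region"
}

# Usage (requires AWS credentials):
# attach_elastic_ip i-0123456789abcdef0
```

If you go this route, it is probably simplest to attach the address before generating vsftpd.conf in Step 6, since that step looks up the instance's public IP at the time it runs.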

Step 4: Build and Install S3FS from Source

Note: The remainder of the S3 FTP installation can be performed quickly by running the s3ftp.install.sh script on the EC2 instance that you have just provisioned. The script assumes that the S3 bucket has been created in the Oregon (us-west-2) region. If your setup is different, update the variables at the top of the script accordingly.

Otherwise, perform the S3 FTP installation manually as follows.

Next we need to update the local operating system packages and install extra packages required to build and compile the s3fs binary.

sudo yum -y update && \
sudo yum -y install \
  jq \
  automake \
  openssl-devel \
  git \
  gcc \
  libstdc++-devel \
  gcc-c++ \
  fuse \
  fuse-devel \
  curl-devel \
  libxml2-devel

Download the S3FS source code from GitHub, run the pre-build scripts, build and install the s3fs binary, and confirm s3fs binary is installed correctly.

git clone https://github.com/s3fs-fuse/s3fs-fuse.git
cd s3fs-fuse/
./autogen.sh
./configure
make
sudo make install
which s3fs
s3fs --help

Step 5: Configure FTP User Account and Home Directory

We create our ftpuser1 user account which we will use to authenticate against our FTP service:

sudo adduser ftpuser1
sudo passwd ftpuser1

We create the directory structure for the ftpuser1 user account, which we will later configure within our FTP service and onto which we will mount the bucket using the s3fs binary:

sudo mkdir /home/ftpuser1/ftp
sudo chown nfsnobody:nfsnobody /home/ftpuser1/ftp
sudo chmod a-w /home/ftpuser1/ftp
sudo mkdir /home/ftpuser1/ftp/files
sudo chown ftpuser1:ftpuser1 /home/ftpuser1/ftp/files

Step 6: Install and Configure FTP Service

We are now ready to install and configure the FTP service. We do so by installing the vsftpd package:

sudo yum -y install vsftpd

Take a backup of the default vsftpd.conf configuration file:

sudo mv /etc/vsftpd/vsftpd.conf /etc/vsftpd/vsftpd.conf.bak

We’ll now regenerate the vsftpd.conf configuration file by running the following commands:

sudo -s
EC2_PUBLIC_IP=`curl -s ifconfig.co`
cat > /etc/vsftpd/vsftpd.conf << EOF
anonymous_enable=NO
local_enable=YES
write_enable=YES
local_umask=022
dirmessage_enable=YES
xferlog_enable=YES
connect_from_port_20=YES
xferlog_std_format=YES
chroot_local_user=YES
listen=YES
pam_service_name=vsftpd
tcp_wrappers=YES
user_sub_token=\$USER
local_root=/home/\$USER/ftp
pasv_min_port=40000
pasv_max_port=50000
pasv_address=$EC2_PUBLIC_IP
userlist_file=/etc/vsftpd.userlist
userlist_enable=YES
userlist_deny=NO
EOF
exit

The previous commands should have resulted in the following set of vsftpd configuration properties:

sudo cat /etc/vsftpd/vsftpd.conf
anonymous_enable=NO
local_enable=YES
write_enable=YES
local_umask=022
dirmessage_enable=YES
xferlog_enable=YES
connect_from_port_20=YES
xferlog_std_format=YES
chroot_local_user=YES
listen=YES
pam_service_name=vsftpd
userlist_enable=YES
tcp_wrappers=YES
user_sub_token=$USER
local_root=/home/$USER/ftp
pasv_min_port=40000
pasv_max_port=50000
pasv_address=X.X.X.X
userlist_file=/etc/vsftpd.userlist
userlist_deny=NO

Where X.X.X.X has been replaced with the public IP address assigned to your own EC2 instance.
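Rather than comparing the file by eye, you could check it with a small script. The following is only a sketch: the helper name check_vsftpd_conf is hypothetical, and the list of settings it checks is a subset chosen for illustration, not an exhaustive validation.

```shell
# Hypothetical helper: confirm the generated file contains the settings the
# rest of this walkthrough depends on. Argument: path to vsftpd.conf.
check_vsftpd_conf() {
  conf="${1:-/etc/vsftpd/vsftpd.conf}"
  rc=0

  for setting in \
    "anonymous_enable=NO" \
    "chroot_local_user=YES" \
    "pasv_min_port=40000" \
    "pasv_max_port=50000" \
    "userlist_deny=NO"
  do
    # -x matches whole lines only, -F treats the setting as a literal string.
    if ! grep -qxF "$setting" "$conf"; then
      echo "missing: $setting"
      rc=1
    fi
  done

  # pasv_address must have a value; an empty one means the IP lookup failed.
  if ! grep -qE '^pasv_address=.+$' "$conf"; then
    echo "missing: pasv_address value"
    rc=1
  fi

  return "$rc"
}

# Usage on the instance (the file may need root to read):
# sudo cat /etc/vsftpd/vsftpd.conf > /tmp/vsftpd.conf.check
# check_vsftpd_conf /tmp/vsftpd.conf.check && echo "vsftpd.conf looks complete"
```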

Before we move on, consider the firewall requirements implied by the properties we have just saved into the vsftpd.conf file:

- This configuration is leveraging passive ports (40000-50000) for the actual FTP data transmission. You will need to allow outbound initiated connections to both the default FTP command port (21) and the passive port range (40000-50000).

- The FTP EC2 instance security group will need to allow inbound connections to the ports above, with the source restricted to the external public IP address of your FTP client.
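The inbound security group rules described above might be added with the AWS CLI along these lines. This is a sketch under assumptions: the helper name allow_ftp_ingress is hypothetical, and the client address in the usage comment is a documentation-only placeholder that you would replace with your own public IP.

```shell
# Hypothetical helper: open the FTP command port and the passive range to a
# single client address.
# Arguments: security group ID, client CIDR, region (optional).
allow_ftp_ingress() {
  sg_id="$1"
  client_cidr="$2"
  region="${3:-us-west-2}"

  # Port 21 carries FTP commands; 40000-50000 is the passive data range
  # configured in vsftpd.conf.
  for port in 21 40000-50000; do
    aws ec2 authorize-security-group-ingress \
      --group-id "$sg_id" \
      --protocol tcp \
      --port "$port" \
      --cidr "$client_cidr" \
      --region "$region" || return 1
  done
}

# Usage (requires AWS credentials):
# allow_ftp_ingress SG-ID-HERE 203.0.113.10/32
```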

Since we are configuring a user list file, we need to add our ftpuser1 user account into the vsftpd.userlist file:

echo "ftpuser1" | sudo tee -a /etc/vsftpd.userlist

Finally, we are ready to start the FTP service. We do so by running the command:

sudo systemctl start vsftpd

Let’s check to ensure that the FTP service started up and is running correctly:

sudo systemctl status vsftpd

● vsftpd.service - Vsftpd ftp daemon
Loaded: loaded (/usr/lib/systemd/system/vsftpd.service; disabled; vendor preset: disabled)
Active: active (running) since Tue 2019-08-13 22:52:06 UTC; 29min ago
Process: 22076 ExecStart=/usr/sbin/vsftpd /etc/vsftpd/vsftpd.conf (code=exited, status=0/SUCCESS)
Main PID: 22077 (vsftpd)
CGroup: /system.slice/vsftpd.service
└─22077 /usr/sbin/vsftpd /etc/vsftpd/vsftpd.conf

Step 7: Test FTP with FTP client

We are now ready to test our FTP service. We’ll do so before we add the S3FS mount into the equation.

On a Mac we can use Brew to install the FTP command line tool:

brew install inetutils

Let’s now authenticate against our FTP service using the public IP address assigned to the EC2 instance. In this case the public IP address I am using is 18.236.230.74; this will be different for you. We authenticate using the ftpuser1 user account we previously created:

ftp 18.236.230.74
Connected to 18.236.230.74.
220 (vsFTPd 3.0.2)
Name (18.236.230.74): ftpuser1
331 Please specify the password.
Password:
230 Login successful.
ftp>

We need to ensure we are in passive mode before we perform the FTP put (upload). In this case I am uploading a local file named mp3data – again this will be different for you:

ftp> passive
Passive mode on.
ftp> cd files
250 Directory successfully changed.
ftp> put mp3data
227 Entering Passive Mode (18,236,230,74,173,131).
150 Ok to send data.
226 Transfer complete.
131968 bytes sent in 0.614 seconds (210 kbytes/s)
ftp>
ftp> ls -la
227 Entering Passive Mode (18,236,230,74,181,149).
150 Here comes the directory listing.
drwxrwxrwx 1 0 0 0 Jan 01 1970 .
dr-xr-xr-x 3 65534 65534 19 Oct 25 20:17 ..
-rw-r--r-- 1 1001 1001 131968 Oct 25 21:59 mp3data
226 Directory send OK.
ftp>

Let’s now delete the remote file and then quit the FTP session:

ftp> del mp3data
ftp> quit

Ok that looks good!
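The same upload, list, and delete round trip can be scripted instead of typed interactively. The sketch below uses curl rather than the ftp client shown above, which is a substitution on our part; the helper name ftp_smoke_test is hypothetical. Note that passing the password as an argument exposes it to other users on the machine via the process list, so treat this as a throwaway test only.

```shell
# Hypothetical helper: upload a file, list the directory, then delete the file.
# Arguments: server address, FTP user, FTP password, local file.
ftp_smoke_test() {
  host="$1"
  user="$2"
  pass="$3"
  file="$4"
  name="$(basename "$file")"

  # -T uploads; the trailing slash tells curl to keep the local file name.
  curl --silent --show-error --ftp-pasv --user "$user:$pass" \
    -T "$file" "ftp://$host/files/" || return 1

  # --list-only prints just the names in the remote directory.
  curl --silent --show-error --ftp-pasv --user "$user:$pass" \
    --list-only "ftp://$host/files/" || return 1

  # -Q sends a raw FTP command after login; DELE removes the uploaded file.
  curl --silent --show-error --ftp-pasv --user "$user:$pass" \
    -Q "DELE files/$name" "ftp://$host/" > /dev/null || return 1
}

# Usage (substitute your own address and password):
# ftp_smoke_test 18.236.230.74 ftpuser1 your-strong-password-here ./mp3data
```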

We are now ready to move on and configure the S3FS mount…

Step 8: Startup S3FS and Mount Directory

Run the following commands to launch the s3fs process.

Notes:

- The s3fs process requires the hosting EC2 instance to have the S3FS-Role attached, as it uses the security credentials provided through this IAM Role to gain read/write access to the S3 bucket.

- The following commands assume that the S3 bucket and EC2 instance are deployed/provisioned in the same AWS Region. If this is not the case for your deployment, hardcode the REGION variable to be the region that your S3 bucket resides in.

EC2METALATEST=http://169.254.169.254/latest && \
EC2METAURL=$EC2METALATEST/meta-data/iam/security-credentials/ && \
EC2ROLE=`curl -s $EC2METAURL` && \
S3BUCKETNAME=ca-s3fs-bucket && \
DOC=`curl -s $EC2METALATEST/dynamic/instance-identity/document` && \
REGION=`jq -r .region <<< $DOC`

echo "EC2ROLE: $EC2ROLE"
echo "REGION: $REGION"
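If both values print as empty, one possible cause is that the instance requires IMDSv2 session tokens, which the plain curl calls above do not send. A token-based variant might look like the following sketch. The helper name imds_get and the 300-second token lifetime are our own choices for illustration; the token endpoint and header names are the ones AWS documents for IMDSv2.

```shell
# Hypothetical helper: fetch a metadata path using an IMDSv2 session token.
# Argument: path below /latest, e.g. meta-data/iam/security-credentials/
imds_get() {
  base="http://169.254.169.254/latest"

  # Request a short-lived session token (TTL is in seconds).
  token="$(curl -s -X PUT "$base/api/token" \
    -H "X-aws-ec2-metadata-token-ttl-seconds: 300")" || return 1

  # Present the token on the actual metadata request.
  curl -s -H "X-aws-ec2-metadata-token: $token" "$base/$1"
}

# Usage on the instance:
# EC2ROLE="$(imds_get meta-data/iam/security-credentials/)"
# REGION="$(imds_get dynamic/instance-identity/document | jq -r .region)"
```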

sudo /usr/local/bin/s3fs $S3BUCKETNAME \
  -o use_cache=/tmp,iam_role="$EC2ROLE",allow_other /home/ftpuser1/ftp/files \
  -o url="https://s3-$REGION.amazonaws.com" \
  -o nonempty

Let’s now do a process check to ensure that the s3fs process has started:

ps -ef | grep s3fs
root 12740 1 0 20:43 ? 00:00:00 /usr/local/bin/s3fs ca-s3fs-bucket -o use_cache=/tmp,iam_role=S3FS-Role,allow_other /home/ftpuser1/ftp/files -o url=https://s3-us-west-2.amazonaws.com

Looks Good!!
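A running process does not by itself prove that the mount is in place, so an additional check against the kernel's mount table can be useful. The sketch below assumes the mount is reported with the filesystem type fuse.s3fs, which is how s3fs mounts normally appear on Linux; the helper name is_s3fs_mounted is hypothetical.

```shell
# Hypothetical helper: succeed if an s3fs FUSE mount exists at the given path.
# Arguments: mount point, mounts table (defaults to the kernel's live table).
is_s3fs_mounted() {
  mount_point="$1"
  mounts_file="${2:-/proc/mounts}"

  # Fields in /proc/mounts: device, mount point, filesystem type, options...
  awk -v mp="$mount_point" \
    '$2 == mp && $3 == "fuse.s3fs" { found = 1 } END { exit !found }' \
    "$mounts_file"
}

# Usage on the instance:
# is_s3fs_mounted /home/ftpuser1/ftp/files && echo "bucket is mounted"
```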

Note: If required, the following debug command can be used for troubleshooting and debugging of the S3FS Fuse mounting process:

sudo /usr/local/bin/s3fs ca-s3fs-bucket \
  -o use_cache=/tmp,iam_role="$EC2ROLE",allow_other /home/ftpuser1/ftp/files \
  -o dbglevel=info -f \
  -o curldbg \
  -o url="https://s3-$REGION.amazonaws.com" \
  -o nonempty

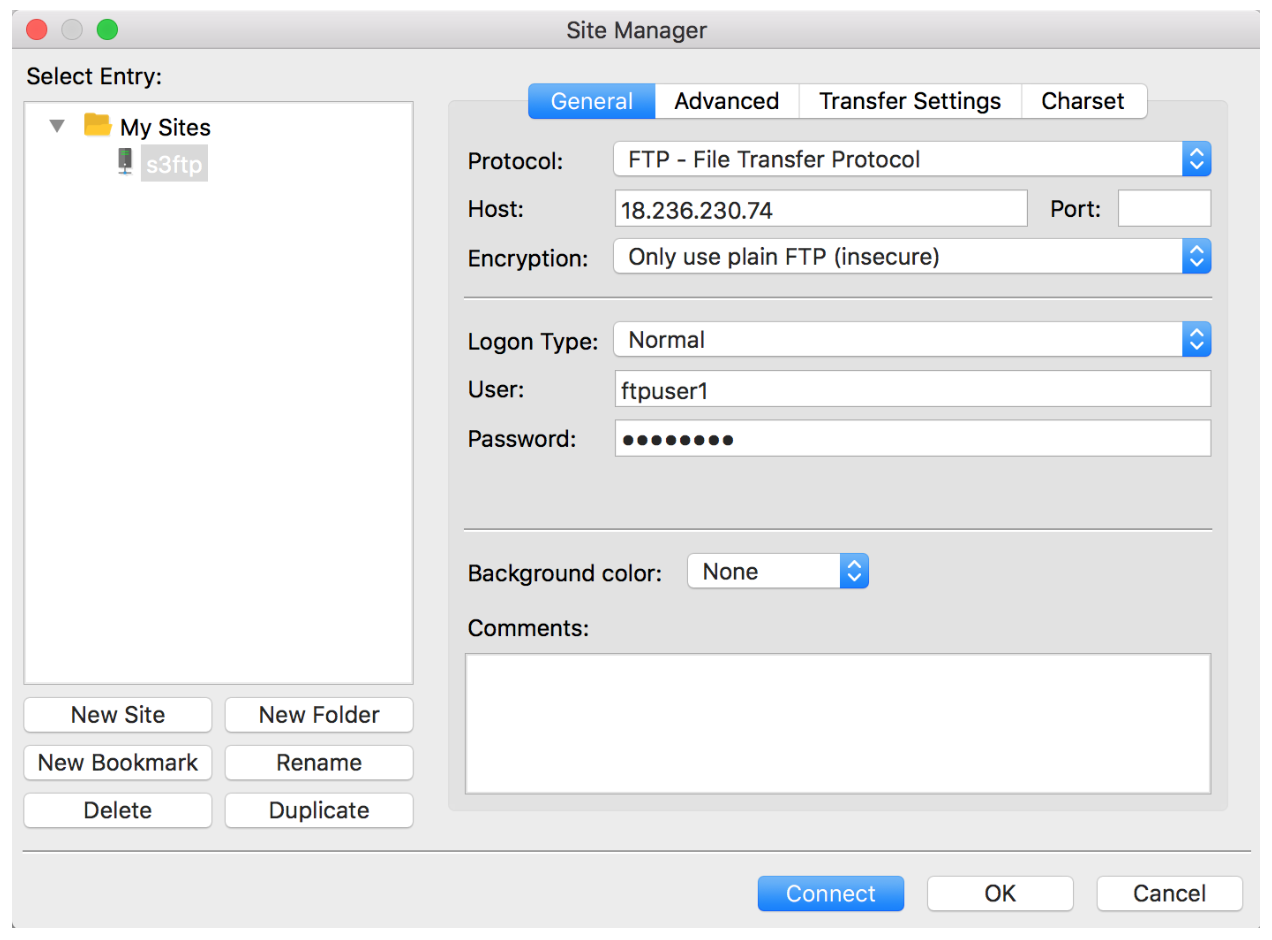

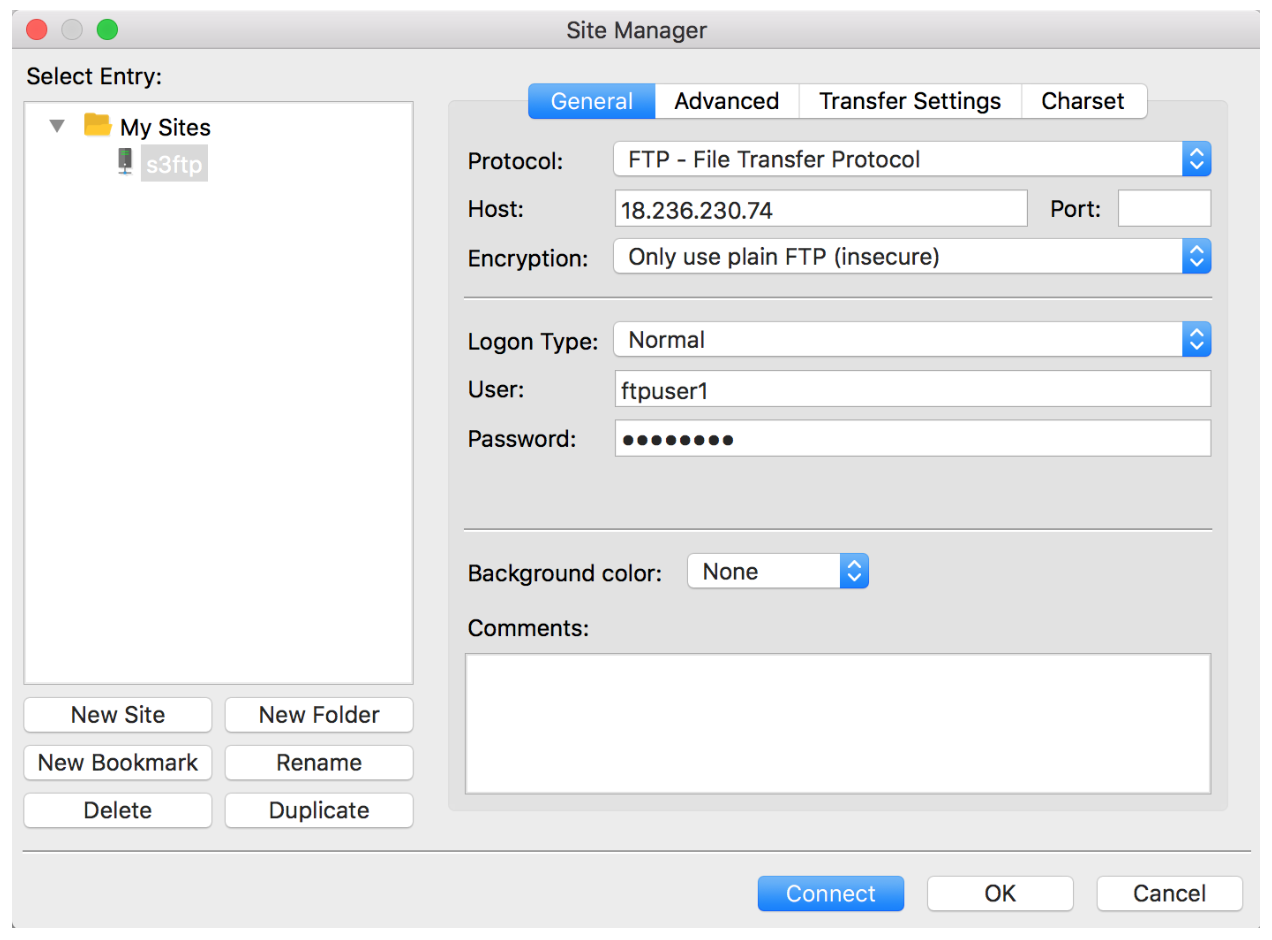

Step 9: S3 FTP End-to-End Test

In this test, we are going to use FileZilla, an FTP client. We use Site Manager to configure our connection. Note that here we explicitly set the encryption option to insecure for demonstration purposes. Do NOT do this in production when transferring sensitive files; instead, set up SFTP or FTPS.

With our FTP connection and credential settings in place we can go ahead and connect…

We are now ready to do an end-to-end file transfer test using FTP. In this example we transfer the mp3data file by dragging and dropping it from the left-hand side to the right-hand side, into the files directory. And kaboom, it works!!

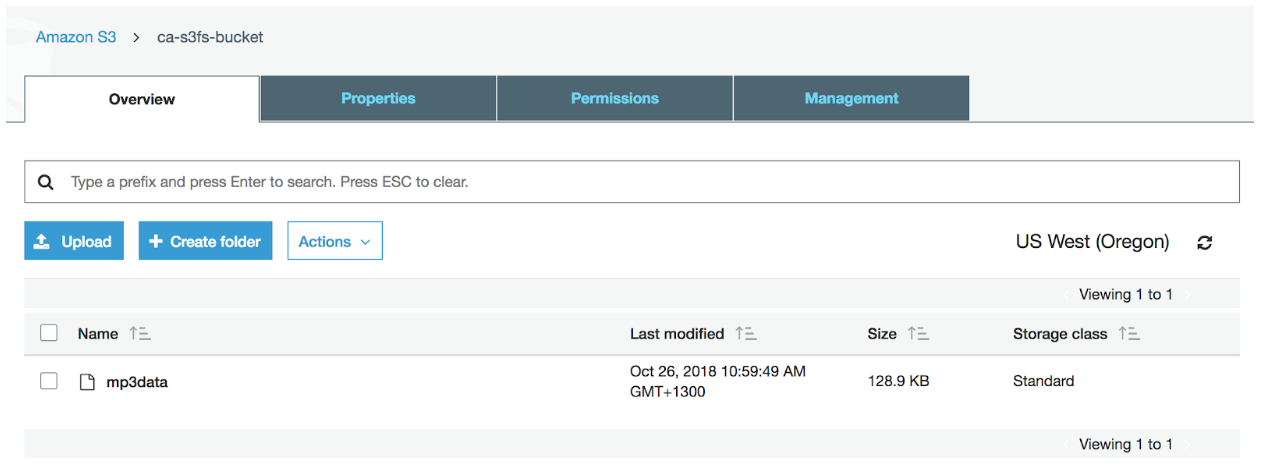

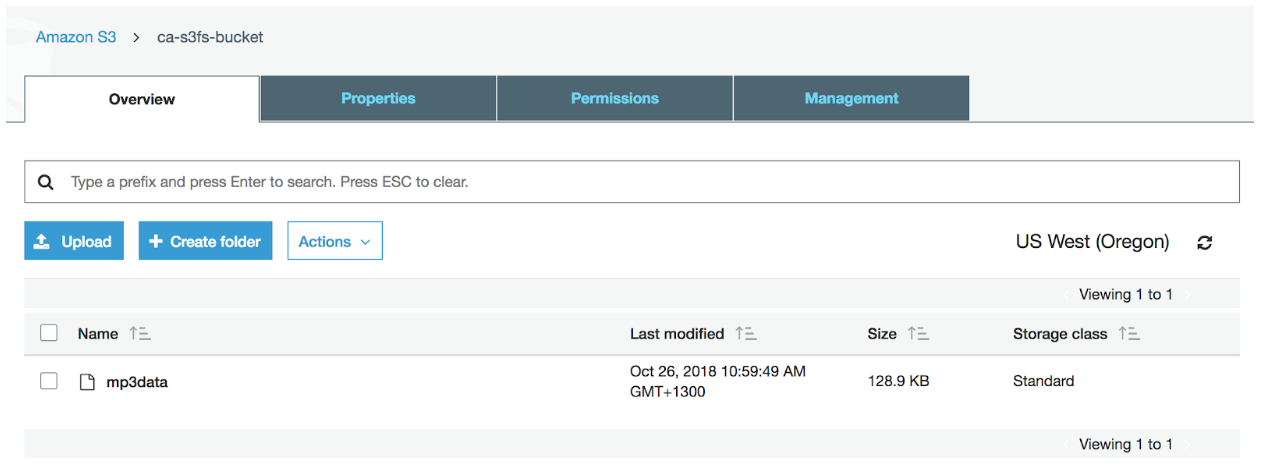

The acid test is to now review the AWS S3 web console and confirm the presence of the mp3data file within the configured bucket, which we can clearly see here:

From now on, any files you FTP into your user directory will automatically be uploaded and synchronized into the respective Amazon S3 bucket. How cool is that!
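The console check above can also be done from the command line. As a minimal sketch, assuming you have the AWS CLI configured with read access to the bucket, a hypothetical helper named object_in_bucket could ask S3 whether the object exists:

```shell
# Hypothetical helper: succeed if the given key exists in the bucket.
# Arguments: bucket name, object key.
object_in_bucket() {
  bucket="$1"
  key="$2"

  # head-object fetches metadata only and fails if the object is absent.
  aws s3api head-object --bucket "$bucket" --key "$key" > /dev/null 2>&1
}

# Usage (requires AWS credentials):
# object_in_bucket ca-s3fs-bucket mp3data && echo "mp3data reached S3"
```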

Summary

Voila! An S3 FTP server!

As you have just witnessed – we have successfully proven that we can leverage the S3FS-Fuse tool together with both S3 and FTP to build a file transfer solution. Let’s again review the S3 associated benefits of using this approach:

- Amazon S3 provides infrastructure that’s “designed for durability of 99.999999999% of objects.”

- Amazon S3 is built to provide “99.99% availability of objects over a given year.”

- You pay for exactly what you need, with no minimum commitments or up-front fees.

- With Amazon S3, there’s no limit to how much data you can store or when you can access it.

If you want to deepen your understanding of how S3 works, check out the Cloud Academy course Storage Fundamentals for AWS.